Monday, 19 January 2026

10:00–11:00 AM IST

Location

Room 002, School of Engineering and Applied Science

Central Campus


Auditory-Motor Computations for Timing, Sequencing, and Learning

Arts and Sciences Research Seminar Series

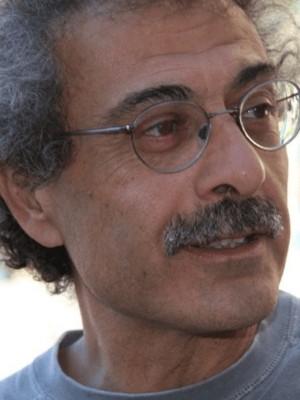

Shihab A. Shamma

Professor

University of Maryland, College Park

Speaker

Auditory perception is tightly coupled to action: we speak and sing with precise timing and continuously adapt our motor output using auditory feedback. In this talk, I will discuss recent work that treats the auditory–motor loop as a computational system for prediction, sequencing, and control. I will emphasise how motor planning and movement-related signals shape auditory representations even when the primary task is “listening.” I will outline a framework in which the auditory cortex does not merely encode acoustics but also participates in sensorimotor inference. In this framework, it generates predictions about upcoming sensory input, compares those predictions with feedback, and updates the internal models that support learning and robust behaviour in noise.

I will present evidence and modelling ideas that link (i) fast auditory feature tracking, (ii) temporal expectation and rhythm, and (iii) feedback-based error signals to coordinated interactions among auditory cortical fields and motor-related regions. Finally, I will discuss the implications for speech and music, and for NeuroAI and brain–computer interface (BCI) applications, in which decoding and closed-loop stimulation can benefit from explicitly modelling perception–action coupling.

Speaker

Shihab A. Shamma

Shihab A. Shamma is a Professor at the University of Maryland, College Park, and at the École Normale Supérieure, Paris. He also serves as an Adjunct Professor at IISc Bengaluru. He is an IEEE Fellow, recognised for his contributions to the field. Professor Shamma’s research is widely regarded as foundational in auditory perception and auditory neuroscience. His work combines theory, neurophysiology, and computational and neuromorphic approaches to explain how the brain encodes and recognises complex sounds, including speech and music. It also examines how attention, context, and learning-related plasticity shape auditory processing.